Your marketing team just spent three weeks debating whether the new homepage should say “Start Your Free Trial” or “Get Started Today.” Meanwhile, your product team is arguing about button colors, and leadership wants to know which pricing page will drive more conversions. Sound familiar?

A/B testing cuts through the noise. Instead of endless meetings and gut-feeling decisions, you split your audience, test both versions, and let real user behavior decide. This guide explains A/B testing in full. We’ll cover the 7-step process that keeps you from wasting time, show you A/B testing examples, and explain how to scale testing across your organization.

Key takeaways

- Test one variable at a time to get reliable results: Change only headlines, buttons, or images — never multiple elements together — so you know exactly what drives performance improvements.

- Run tests for full business cycles regardless of early results: Wait 2-4 weeks to account for weekly patterns and reach proper sample sizes, even when one version seems to be winning immediately.

- Focus testing on high-traffic, high-value pages first: Prioritize homepage headlines, checkout flows, and email subject lines where small improvements create the biggest revenue impact.

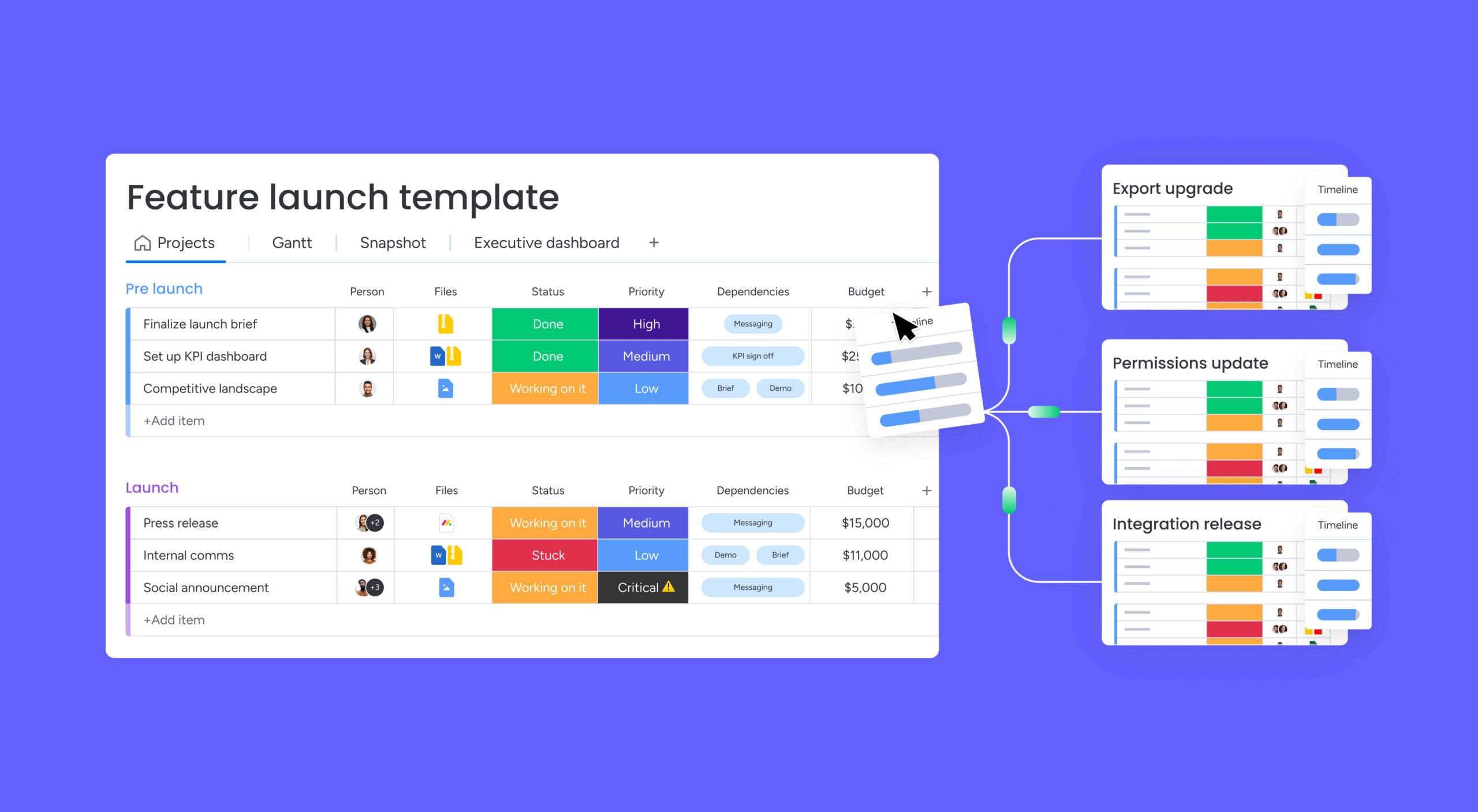

- Coordinate complex testing programs with monday work management: Centralize test planning, automate team notifications, and track results through real-time dashboards that connect strategy to execution across departments.

- Build hypotheses from real data, not assumptions: Structure predictions as “If we change X, then Y will improve because Z” using actual user behavior insights rather than gut feelings.

What is A/B testing?

A/B testing is a method for comparing two versions of something to determine which performs better against a specific goal.

You show version A to one group and version B to another group, then measure which drives stronger results. Think of it as running a controlled experiment where you test a specific change against your current approach to validate decisions with real data.

A/B testing connects data to user experience through conversion rate optimization. A marketing team might test whether a “Get a Demo” button drives better conversion rates than “Contact Sales.” Rather than debating which messaging works, the team splits traffic equally and measures actual conversion rates. This approach proves what’s worth building before you spend the budget.

Understanding A/B testing fundamentals

The validity of an A/B test depends on four core components that work together to produce trustworthy results. Get these right, and your data shows real behavior instead of noise.

- Control: Your current baseline experience that serves as the benchmark

- Variation: A single specific change you introduce to test your hypothesis

- Random assignment: The mechanism that gives every user an equal chance of seeing either version

- Statistical measurement: The analysis that determines whether performance differences are real or due to chance

Random assignment makes A/B testing reliable. Evenly distributing factors like time of day or user demographics across both groups allows you to attribute any performance difference directly to the change being tested rather than external factors.
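One common way to implement random assignment is to hash a stable user ID into a bucket. The sketch below is an illustration under stated assumptions, not a specific platform's method: the function name `assign_variant`, the ID format, and the experiment name are all hypothetical.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variation_share: float = 0.5) -> str:
    """Deterministically map a user to 'control' or 'variation'."""
    # Salt the hash with the experiment name so one user can land in
    # different groups across different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the first 8 hex characters into a number in [0, 1).
    bucket = int(digest[:8], 16) / 2**32
    return "variation" if bucket < variation_share else "control"

# Hypothetical usage: the same user always gets the same answer.
print(assign_variant("user-42", "homepage-headline"))
```

Because the assignment depends only on the user ID and the experiment name, a returning visitor keeps seeing the same version, and no lookup table is needed.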

How A/B testing works in practice

A successful A/B test follows a structured workflow designed to produce reliable data.

- Hypothesis formation: Identify a problem and predict a solution based on data or user insights.

- Audience splitting: Divide incoming traffic between control and variation groups.

- Simultaneous testing: Run both groups at the same time to create consistent conditions.

- Data collection: Track user interactions against defined metrics throughout the test.

- Statistical analysis: Determine if the performance difference is statistically significant, not just random chance.

Warning: Running tests simultaneously is essential; sequential testing introduces time-based variables like seasonality that corrupt the data.
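For the statistical analysis step, a two-proportion z-test is one standard way to check whether a difference in conversion rates is more than random chance. This is a minimal sketch using only the Python standard library; the visitor and conversion counts are made up for illustration, and your testing platform may use a different method.

```python
import math

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical numbers: 200 of 5,000 control visitors converted (4%),
# versus 250 of 5,000 variation visitors (5%).
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```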

A/B testing vs. multivariate testing

A/B testing compares two versions, while multivariate testing considers multiple changes at once. Your choice depends on traffic volume and the complexity of what you’re testing.

| Feature | A/B testing | Multivariate testing |
|---|---|---|
| Variables tested | One major variable at a time | Multiple variables tested simultaneously |
| Traffic requirement | Low to moderate | High volume required |
| Complexity | Low; easy to design and analyze | High; requires more advanced setup and analysis |
| Primary use | Validating broad changes or individual elements | Optimizing combinations of multiple elements |
| Time to result | Faster due to fewer variations | Slower due to traffic being split across many variations |
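To see why multivariate tests need more traffic, count the combinations. The sketch below uses a hypothetical figure of 8,000 daily visitors and made-up page elements to show how quickly each variation's share of traffic shrinks.

```python
from itertools import product

def visitors_per_variation(daily_visitors: int, elements: dict):
    """Return (number of combinations, daily visitors each one receives)."""
    combinations = list(product(*elements.values()))
    return len(combinations), daily_visitors // len(combinations)

ab_test = {"headline": ["Start Your Free Trial", "Get Started Today"]}
multivariate_test = {
    "headline": ["Start Your Free Trial", "Get Started Today"],
    "button_color": ["blue", "green"],
    "hero_image": ["photo", "illustration"],
}

daily_visitors = 8_000  # hypothetical traffic figure
print(visitors_per_variation(daily_visitors, ab_test))            # (2, 4000)
print(visitors_per_variation(daily_visitors, multivariate_test))  # (8, 1000)
```

Three elements with two options each already produce eight versions, so each one gets a quarter of the traffic a simple A/B variation would.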

How does A/B testing drive business growth?

A/B testing drives revenue and makes teams more efficient. The impact extends beyond conversion rate optimization, fundamentally changing how businesses allocate capital, manage risk, and develop competitive advantage.

Make data-driven decisions

Testing eliminates the “Highest Paid Person’s Opinion” phenomenon, where strategy gets dictated by hierarchy rather than reality. When a retail company tests a new pricing strategy, the decision to implement is based on actual customer willingness to pay, not an executive’s gut feeling.

This applies across functions:

- Product teams test feature adoption rates

- Marketing teams validate campaign messaging effectiveness

- Sales teams optimize outreach scripts and conversion tactics

When you test, the best idea wins, regardless of who came up with it.

Reduce risk and maximize ROI

Launching a major site redesign or new product feature to your entire audience carries significant risk. A/B testing is your safety net: you roll out changes to a small percentage of users first, which lets you identify negative impacts before they affect your entire bottom line.

You only invest in what works. A checkout flow change that increases abandonment rates can be caught and reversed before it costs millions in lost revenue.

Build a culture of continuous improvement

When you test systematically, your team learns faster. Even “failed” tests provide value by revealing what customers don’t want, which is just as critical as knowing what they do want. This culture encourages experimentation and removes the fear of failure.

When teams know they can test hypotheses with limited risk, they’re more likely to propose innovative solutions. Over time, these small wins add up to an edge your competitors can’t copy.

5 key benefits of A/B testing

A/B testing drives growth and makes your operations stronger and strategy sharper. The more you test, the better you get at it.

- Quantifiable impact measurement: Testing shows you exactly how much each change is worth. Instead of assuming a new landing page is “stronger,” teams can report the specific increase in monthly recurring revenue it generates.

- Reduced implementation risk: Big changes break things you didn’t expect. Testing catches these problems before they hit everyone.

- Enhanced customer understanding: Testing shows what users do, not what they say they’ll do in surveys. Watch how users interact with different layouts, and you’ll learn what really drives their behavior.

- Improved team alignment: Data settles arguments between teams. When design and marketing can’t agree, run a test. Problem solved.

- Accelerated learning cycles: Test fast, learn fast. You can test new ideas every week instead of waiting months.

How to run A/B tests: 7-step process

A structured process keeps your tests reliable and repeatable. Work management platforms keep developers, designers, and analysts in sync so tests keep moving. Stick to this process, and you won’t waste time on broken tests.

Step 1: Set testing goals

Start every test with a clear goal tied to a key metric. Vague goals like “improve engagement” lead to inconclusive results.

Instead, use stronger goals that are more precise, like: “Increase the click-through rate on the demo request button by 10%.”

Get everyone to agree on the metric upfront. Otherwise, someone will change it halfway through. With monday work management, you track goals and milestones in one place so everyone stays on the same page.

Step 2: Develop your hypothesis

A hypothesis is a prediction rooted in insight, structured as an “If… then… because…” statement. For example: “If we remove the navigation bar from the checkout page, completion rates will increase because users have fewer distractions.”

Good hypotheses come from data, research, or psychology. Instead of basing your strategy on guesswork, determine what evidence supports your current hypothesis.

Step 3: Calculate required sample size

A statistical power calculation tells you how many users you need before you can trust the results. Run a test without enough traffic, and you’ll get bad data.

Instead, use a sample size calculator, for which you’ll need three things:

- Current conversion rate: Your baseline performance metric

- Minimum detectable effect: The smallest change worth detecting

- Desired statistical confidence level: Usually set at 95%
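If you want to see what a sample size calculator does under the hood, here is a rough sketch of the standard formula for comparing two conversion rates. It takes the three inputs above plus one assumption that is not in the list: statistical power of 80%, a common default. The function name and example numbers are illustrative only.

```python
import math
from statistics import NormalDist

def sample_size_per_variation(baseline_rate: float, relative_mde: float,
                              confidence: float = 0.95, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH group for a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)  # rate you hope the variation reaches
    p_avg = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)  # assumed 80% power by default
    numerator = (z_alpha * math.sqrt(2 * p_avg * (1 - p_avg))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

# Hypothetical inputs: 5% baseline conversion, 10% relative lift worth detecting.
print(sample_size_per_variation(0.05, 0.10))
```

With these inputs the answer lands at roughly 31,000 visitors per variation. Doubling the minimum detectable effect cuts the requirement sharply, which is why small expected lifts need so much traffic.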

Step 4: Create test variations

Keep your test tightly focused. Change too many things at once, and you won’t know what worked.

So, test one thing at a time. For example, if you’re testing a landing page, change only the headline — not the headline, button color, and form fields simultaneously. Get design and engineering working together to make sure the variation works on every device and browser.

Step 5: Launch your test

When you launch, you’ll configure the testing platform and segment your audience. Run through a pre-flight checklist to make sure tracking works and traffic splits evenly.
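One pre-flight check worth automating is whether traffic really splits the way you configured it. The sketch below runs a chi-square test for sample ratio mismatch on visitor counts per group. The 0.001 alert threshold is an assumed convention rather than a fixed rule, and the counts are invented.

```python
import math

def sample_ratio_mismatch(n_control: int, n_variation: int,
                          expected_variation_share: float = 0.5,
                          alpha: float = 0.001):
    """Return (p_value, mismatch_flag) for the observed traffic split."""
    total = n_control + n_variation
    expected_variation = total * expected_variation_share
    expected_control = total - expected_variation
    chi2 = ((n_control - expected_control) ** 2 / expected_control
            + (n_variation - expected_variation) ** 2 / expected_variation)
    # Survival function of a chi-square distribution with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return p_value, p_value < alpha

print(sample_ratio_mismatch(5000, 5050))  # small gap, likely just noise
print(sample_ratio_mismatch(5000, 5500))  # large gap, worth investigating
```

A flagged mismatch usually points to a tracking or redirect bug, so fix the split before trusting any conversion numbers.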

Running a complex test with multiple teams? monday work management gives you one place where marketing, product, and engineering can sync on timing. Automations alert the right people when tests launch, so teams don’t accidentally mess with each other’s experiments.

Step 6: Monitor performance

Once your test is live, watch for bugs, not early wins. If conversions tank right away, something’s broken. Don’t stop the test just because one version looks like it’s winning.

Be patient. You need to account for weekly patterns and hit your sample size. Real-time dashboards let you track how tests are doing without jumping to conclusions.
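A quick calculation can tell you up front how long to stay patient. This sketch assumes you already know your required sample size per variation and your average daily traffic (both numbers below are hypothetical), and it rounds up to whole weeks with a two-week floor so every weekday is covered equally.

```python
import math

def planned_test_days(sample_per_variation: int, daily_visitors: int,
                      variations: int = 2, min_weeks: int = 2) -> int:
    """Days to run the test, rounded up to full weeks."""
    days_for_sample = math.ceil(sample_per_variation * variations / daily_visitors)
    weeks = max(min_weeks, math.ceil(days_for_sample / 7))
    return weeks * 7

# Hypothetical: 31,234 visitors needed per variation, 4,000 visitors per day.
print(planned_test_days(31_234, 4_000))  # 21
```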

Step 7: Analyze and act on results

Analysis goes beyond checking which version “won.” Check if the results are statistically significant and if the improvement is worth the effort to roll out.
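To judge whether an improvement is worth rolling out, it helps to look at the size of the lift and its uncertainty, not just a pass or fail on significance. The following is a simple sketch with invented counts; it uses a normal-approximation confidence interval, which is one of several reasonable choices.

```python
import math
from statistics import NormalDist

def lift_with_interval(conv_a: int, n_a: int, conv_b: int, n_b: int,
                       confidence: float = 0.95) -> dict:
    """Relative lift plus a confidence interval on the absolute difference."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    diff = rate_b - rate_a
    # Unpooled standard error, appropriate for an interval estimate.
    std_err = math.sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return {
        "relative_lift": diff / rate_a,
        "absolute_diff": diff,
        "interval": (diff - z * std_err, diff + z * std_err),
    }

# Hypothetical results: control 200/5,000, variation 250/5,000.
result = lift_with_interval(200, 5000, 250, 5000)
print(result)
```

If the whole interval sits above zero but its lower end is tiny, the change is probably real yet may not repay the engineering effort. That is a business call the interval makes visible.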

Roll out the winner to everyone. If the test was inconclusive, document what you learned anyway. With monday workdocs, you can save test results, methods, and takeaways in one searchable place so future tests start from what you already know.

What to A/B test for maximum impact

Focus your testing efforts where they’ll drive the most revenue. High-traffic touchpoints across your marketing, product, and sales operations offer the biggest opportunities. Start with these areas, and you’ll see measurable wins fast.

Website and landing pages

The “above the fold” area of websites offers the highest potential for impact. Headlines decide if users stick around or leave, while call-to-action buttons should be tested for copy, color, and placement.

Forms are another big win for testing. Each extra field you add makes people less likely to finish; test a short 3-field form against a longer 7-field one, and you’ll see a huge difference in how many people submit.

Also test your value proposition. Do people care more about saving time, saving money, or getting better features? Find your highest-traffic page that you haven’t tested in 6 months. That’s usually where you’ll find the easiest wins.

Email marketing campaigns

Email A/B testing is a great chance to play around with your subject lines, which can make or break your open rates. Testing questions against statements or short copy against long can flip your results completely.

Beyond subject lines, test sender names and send times to lift open rates, and test personalization and button placement to lift click-through rates, which you can track through email marketing analytics. Segmentation is key here, as different audiences may respond differently to the same variables in email marketing campaigns.

Product features and UX

Product feature testing focuses on two critical metrics: initial adoption and long-term retention. Your onboarding flow is the first place to test. A streamlined 3-step onboarding might increase signup completion rates by 25%, but if users don’t understand core functionality, you’ll see higher churn within 30 days. Test the trade-off between speed and education to find the optimal balance.

Feature discoverability directly impacts usage rates. Navigation placement tests reveal how users find functionality. When you move a high-value feature from a nested submenu into primary navigation, usage typically jumps 40-60% because users no longer need to hunt for it. Test information architecture changes like sidebar positioning, menu categorization, and search functionality to understand how placement affects feature adoption across different user segments.

Pricing and promotional offers

Pricing tests carry higher stakes than most experiments because they directly impact revenue and brand perception, but the potential returns justify the risk.

Start with presentation formats: does “$99/month” convert better than “$1,188/year”? The way you display identical pricing can shift conversion rates by 15-30%. From there, test tier structures: a three-tier “Basic,” “Professional,” and “Enterprise” model performs differently than a two-tier approach.

Test discount framing too, since “Save $200” hits differently than “20% off” even when the savings are identical. Anchoring techniques also work: displaying a $299/month enterprise plan alongside your $99/month standard plan makes the standard option feel more accessible without seeming cheap.

How to avoid common A/B testing pitfalls

Even when you mean well, mistakes can ruin your data and lead to bad calls. Save time by being aware of the following.

- Testing too many variables simultaneously: Change headlines, images, and buttons all at once? You won’t know what made the difference.

- Stopping tests too early: Stop a test the second it hits significance, and you sharply raise the odds of a false positive. Let tests run for the full planned time to catch weekly patterns.

- Ignoring statistical significance: Making decisions based on “directional” data that hasn’t reached significance is essentially guessing.

- Testing without sufficient traffic: Test a low-traffic page, and you might wait months for results. By then, the market’s changed.

- Not accounting for external factors: Run tests during Black Friday or a PR crisis? Your data will be off. People don’t behave normally during these times.
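The early-stopping pitfall is easy to demonstrate with a simulation. The sketch below runs A/A tests, where both groups are identical by design, and compares how often a team that checks results ten times along the way would declare a winner against a team that only checks at the end. All parameters are arbitrary choices for illustration.

```python
import math
import random

def z_stat(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return 0.0  # no variation yet, so no evidence either way
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (conv_b / n_b - conv_a / n_a) / std_err

def simulate_aa_tests(runs=200, users_per_group=2000, looks=10, rate=0.10, seed=7):
    """Return (false-positive rate with peeking, rate with one final check)."""
    rng = random.Random(seed)
    checkpoint = users_per_group // looks
    peeking_hits = final_hits = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        ever_significant = False
        for n in range(1, users_per_group + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # A peeking team would stop at the first "significant" checkpoint.
            if n % checkpoint == 0 and abs(z_stat(conv_a, n, conv_b, n)) > 1.96:
                ever_significant = True
        peeking_hits += ever_significant
        final_hits += abs(z_stat(conv_a, users_per_group, conv_b, users_per_group)) > 1.96
    return peeking_hits / runs, final_hits / runs

peeking_rate, final_rate = simulate_aa_tests()
print(f"False winners with peeking: {peeking_rate:.0%}, with one final check: {final_rate:.0%}")
```

Since neither version is actually better, every declared winner here is a false positive. The peeking rate typically comes out well above the roughly 5% you would expect from a single check at the planned end date.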

Scale A/B testing across your organization with monday work management

If you’re running heaps of tests, you’ll need a system that keeps people, processes, and data in sync. monday work management transforms A/B testing by serving as the operational backbone for the entire testing lifecycle. Teams utilize boards to map out testing roadmaps, storing hypotheses, design assets, and technical requirements in one accessible location. Here’s how monday work management becomes a single source of truth for your next A/B testing campaign.

Automate testing workflows to reduce manual work

The platform reduces operational friction through powerful automations. When test status changes to “Live,” automations instantly notify the analytics team to begin monitoring. When tests conclude, the system triggers tasks for strategists to document results.

This keeps your testing program moving without constant manual check-ins or status updates slowing things down.

Let AI handle the heavy lifting

monday AI takes testing efficiency further with intelligent assistance. AI Blocks categorize test results and summarize lengthy analysis documents, allowing teams to focus on strategy rather than administration.

monday sidekick acts as your testing copilot, answering questions like “Which tests are running this week?” or “What was our win rate last quarter?” without digging through boards. The agentic AI can even proactively suggest next steps based on test outcomes, keeping your program moving forward.

Track tests with real-time dashboards

Customizable dashboards provide high-level views of testing program health. Executives view portfolio summaries showing active test numbers and cumulative revenue impact, while product managers drill down into specific experiment performance.

These visual reports aggregate data from multiple sources, providing visibility into win rates and velocity without manual report generation. monday sidekick can generate instant dashboard summaries on demand, translating complex data into plain-language insights that drive faster decision-making.

Enable cross-team collaboration

Successful testing requires input from design, copy, development, and data teams. monday work management facilitates this through shared workspaces and item-level communication.

Feedback on variation designs happens directly on files within the platform, preserving context. monday workdocs allow teams to co-author test plans and result analyses in real time, breaking down departmental silos and fostering a collaborative experimentation culture. Meanwhile, monday sidekick can draft initial test hypotheses or summarize stakeholder feedback threads, speeding up collaboration so nothing gets lost in translation.

Transform your testing program into a competitive advantage

Teams that master systematic A/B experimentation make faster, more confident decisions while reducing the risk of costly mistakes. The most successful organizations treat testing as a core competency, not an occasional tactic. They invest in proper tools, processes, and training to validate every hypothesis with real data.

Ready to scale your testing program? monday work management provides the operational foundation to coordinate complex experiments across teams, automate routine workflows, and turn insights into action. Start building your experimentation advantage today.

FAQs

What is the minimum traffic needed for A/B testing?

The minimum traffic needed for A/B testing depends on your baseline conversion rate and desired detectable effect, but reliable tests typically require thousands of visitors per variation to reach statistical significance within a reasonable timeframe.

How long should I run an A/B test?

You should run an A/B test for a minimum of one to two full business cycles, usually 2-4 weeks, to account for day-of-week variances regardless of how quickly statistical significance is reached.

Can you run multiple A/B tests simultaneously?

Yes, you can run multiple A/B tests simultaneously, but it requires careful audience segmentation and coordination to prevent tests on different page elements from interfering with each other's data.

What does statistical significance mean in A/B testing?

Statistical significance in A/B testing means the observed difference between variations is unlikely to be explained by random chance alone. At the typical 95% confidence level, a difference that large would show up less than 5% of the time if the two versions actually performed the same.

How much does A/B testing typically cost?

A/B testing costs vary significantly — basic testing can be done with free platforms and internal time, while enterprise-scale programs involving advanced software and dedicated agencies can cost thousands to tens of thousands of dollars monthly.

How does monday work management help coordinate A/B testing across teams?

monday work management acts as a centralized operating system that organizes test planning, automates approval workflows, provides real-time visibility into testing roadmaps, and connects cross-functional teams for seamless collaboration.