An organization scaling AI without a strategy is like an orchestra where every musician plays a different song. The marketing team’s violin plays a beautiful solo, operations has a powerful drumbeat, and IT’s brass section is flawless. Each part sounds great in isolation, but together, it’s just noise. Most companies find themselves in this exact spot. They’ve got AI experiments scattered across departments, each delivering real value in isolation, but no coordinated strategy to scale those wins company-wide. The gap between “AI pilot success” and “enterprise-wide AI adoption” isn’t about technology limitations. It’s about organizational readiness, cross-departmental coordination, and having the governance foundation to scale AI safely across teams.

This guide breaks down a 7-step framework for turning scattered AI experiments into a coordinated adoption strategy. You’ll learn how to assess readiness, prioritize the right use cases, build the data foundation AI actually needs, and set up governance that accelerates adoption instead of slowing it down. You’ll also see how platforms that centralize work across teams create the visibility and coordination that make enterprise AI adoption possible, including solutions like monday agents that integrate directly into existing workflows.

Key takeaways

- Start with business outcomes, not AI features: Identify 3-5 strategic objectives your organization already prioritizes, then map specific workflows where AI can deliver measurable impact on those goals.

- Build your data foundation before scaling AI: Consolidate scattered departmental data into one connected system with standardized formats so AI can access and use information across teams.

- Design governance from day one, not after deployment: Establish granular permissions, audit trails, and human oversight checkpoints before scaling AI to avoid compliance gaps that force costly rollbacks.

- Deploy AI agents where teams already work: monday agents integrate directly into existing workspaces, handling tasks like risk analysis and status reporting without forcing teams to learn separate systems.

- Invest in people alongside technology: Provide role-specific training and redesign workflows around human-AI partnerships to ensure adoption compounds in value rather than stalling after initial rollout.

What is an AI adoption strategy?

An AI adoption strategy is your blueprint for choosing, rolling out, scaling, and governing AI across the organization. It connects your tech spending to actual business goals, team capabilities, and compliance needs. It’s what turns scattered AI experiments into something that actually works across your entire company.

This differs fundamentally from “using AI.” Plenty of teams use AI: they generate content with a chatbot, summarize meetings with an assistant, or automate a single workflow.

An AI adoption strategy is about changing how your organization works, not just installing new tech.

It tackles how AI changes roles, workflows, decisions, and how teams work together across departments.

An AI adoption strategy covers five areas that need to work together:

- Identifying value: Pinpointing where AI creates the most business value based on strategic priorities

- Preparing infrastructure: Ensuring data and systems can support AI at scale

- Upskilling people: Building AI fluency across every level of the organization

- Establishing governance: Creating policies that enable speed without sacrificing security

- Measuring outcomes: Proving and compounding ROI over time

Why most AI pilots never reach production

Most companies have tried AI by now, so why aren’t more seeing results across the whole organization? The problem isn’t the technology, but everything else: how teams coordinate, how data connects, and whether governance exists. According to McKinsey, only about one-third of organizations report they have begun to scale AI across the enterprise, and just 39% report any enterprise-level EBIT impact attributable to AI. Launching pilots is the easy part. The hard part is scaling them across departments with real governance and measurable results.

The gap between experimentation and enterprise-wide AI

“Pilot purgatory” is the state where organizations launch promising AI experiments that never graduate to broader use. A marketing team deploys AI for content generation and sees real time savings, or an operations group automates weekly status reports and frees up hours. Those wins stay locked inside one department, invisible to the rest of the organization, with no plan to extend the results.

Here’s what pilot purgatory looks like:

- Siloed experiments: No visibility across departments into what’s working

- Undefined success criteria: ROI becomes impossible to demonstrate

- Data fragmentation: AI models trained on one department’s data can’t access information from other teams

Where organizations get stuck preparing for AI adoption

The real barriers to scaling AI are organizational, cultural, and technical. Most AI projects need input from IT, operations, legal, HR, and business teams, but these groups rarely work in the same place where they can actually sync up, track progress, and hand things off smoothly.

Culture creates just as many roadblocks:

- Role displacement fears: Team members fear AI will replace their roles rather than augment their capabilities

- Passive resistance: Teams quietly avoid or work around AI tools, leading to slower adoption and reduced effectiveness

- Governance gaps: Without policies for data privacy, bias monitoring, and compliance, legal and security teams block broader rollouts as a precaution

AI adoption strategies vs. AI implementation plans

People use these terms interchangeably, but they mean different things. Mix them up and you’ll either over-plan individual projects or under-plan for the whole organization.

| Dimension | AI adoption strategy | AI implementation plan |
|---|---|---|
| Focus | Why and where to adopt AI across the organization | How to deploy a specific AI solution |
| Scope | Enterprise-wide, cross-departmental | Project-specific or department-specific |
| Timeframe | 12–36 months | Weeks to months per initiative |
| Owned by | Executive leadership and cross-functional steering committee | Project managers and technical leads |
| Success metric | Organization-wide AI maturity and business impact | On-time, on-budget delivery of a specific AI capability |

A solid AI adoption strategy generates multiple implementation plans as you scale. Think of the strategy as a city’s transportation plan that shows which routes need coverage and how everything connects. Each implementation plan is the blueprint for building a specific highway or rail line within that master plan.

How to assess your organization's AI readiness

Before you invest in scaling AI, figure out where your organization actually stands today. Readiness comes down to three things: data, people, and governance. If any one of these is weak, your entire AI effort can stall.

1. Evaluate data readiness and infrastructure

Data readiness means your data is accessible, structured, accurate, and connected across departments so AI can actually use it.

Most companies don’t lack data. The problem is that it’s scattered across departments in different formats with nothing connecting it. Gartner research confirms this: data availability and quality are cited as top AI implementation challenges by 34% of leaders in low-maturity organizations and 29% in high-maturity ones. Organizations should evaluate:

- Data centralization: Is information consolidated or scattered across systems?

- Format consistency: Do departments use standardized naming conventions and structures?

- Infrastructure readiness: Are your systems cloud-ready, with robust API connectivity?

2. Assess workforce skills and AI fluency

This is where most companies underestimate the challenge. AI fluency isn’t about building AI models. It’s whether your organization understands what AI can do, knows where it adds value, and can work confidently with AI-powered workflows.

Key workforce readiness factors include:

- Executive fluency: Setting realistic expectations and strategic direction

- Manager fluency: Redesigning workflows and team processes

- Team fluency: Daily comfort with AI-assisted processes

- Specialist skills: Configuring and refining AI systems

3. Review governance and compliance foundations

Set up governance before you scale, not after. Companies that deploy AI first and add governance later discover compliance gaps that force costly rollbacks. Good governance speeds up adoption by clearing the objections that usually slow things down. The governance foundations organizations need include:

- Data privacy policies: Protecting sensitive information

- Granular access controls: Defining permissions and data access

- Audit trails: Recording every AI action for accountability

- Human-in-the-loop protocols: Ensuring oversight and validation

- Regulatory compliance alignment: Meeting industry standards
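One way to make the three-part readiness check concrete is a simple scorecard. The sketch below is illustrative only: the 1-5 scale, the threshold of 3, and the names `ReadinessScores` and `assess_readiness` are assumptions, not part of any standard framework. It encodes the idea that the weakest of the three dimensions, not the average, determines whether you are ready to scale.

```python
from dataclasses import dataclass


@dataclass
class ReadinessScores:
    """Self-assessed scores on a hypothetical 1-5 scale."""
    data: int
    people: int
    governance: int


def assess_readiness(scores: ReadinessScores, threshold: int = 3) -> dict:
    """Return the weakest dimension and whether it clears the threshold."""
    by_dimension = {
        "data": scores.data,
        "people": scores.people,
        "governance": scores.governance,
    }
    weakest = min(by_dimension, key=by_dimension.get)
    return {
        "weakest_dimension": weakest,
        "overall_score": by_dimension[weakest],  # weakest link, not the average
        "ready_to_scale": by_dimension[weakest] >= threshold,
        "gaps": sorted(d for d, s in by_dimension.items() if s < threshold),
    }


result = assess_readiness(ReadinessScores(data=4, people=3, governance=2))
print(result)
```

With these made-up scores, governance is the gap, so the organization is not flagged as ready to scale even though data and people look healthy.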

7 steps to scale AI from pilot to production

Follow these steps in order, but expect to loop back as you learn and as AI evolves. You’re not deploying AI once and walking away. You’re managing a transformation that gets more valuable over time.

Step 1: Align AI initiatives with business goals

Start with business outcomes, not what the technology can do. The question isn’t “What can AI do?” but “What business problems are we trying to solve, and can AI help?”

Key actions include:

- Identify strategic objectives: Focus on 3–5 business objectives the organization is already committed to

- Map workflows: Connect each objective to specific workflows where AI could contribute

- Secure executive sponsorship: Link AI initiatives to measurable business KPIs

- Establish governance: Create a cross-functional steering committee

Step 2: Prioritize high-impact use cases

Some AI use cases deliver more value than others. Some deliver quick wins with low risk. Others need months of data prep or come with regulatory headaches.

Start with 2–3 high-impact, lower-risk use cases that can demonstrate value quickly:

- Status reporting automation: Across project portfolios

- Risk flagging: Using AI to identify project risks before deadlines slip

- Intake and triage: Deploying AI agents to handle repetitive workflows
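A shortlist like this can be produced with a basic filter-and-rank pass. The sketch below assumes hypothetical 1-5 scores for impact and risk and an estimated number of weeks to first value. All of the numbers, and the "Customer credit scoring" entry, are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int          # expected business value, 1 (low) to 5 (high)
    risk: int            # regulatory or data-prep risk, 1 (low) to 5 (high)
    weeks_to_value: int  # rough estimate of time to a first measurable result


def shortlist(use_cases, max_risk=2, limit=3):
    """Keep lower-risk candidates, then rank by impact and speed."""
    eligible = [u for u in use_cases if u.risk <= max_risk]
    ranked = sorted(eligible, key=lambda u: (-u.impact, u.weeks_to_value))
    return ranked[:limit]


candidates = [
    UseCase("Status reporting automation", impact=4, risk=1, weeks_to_value=4),
    UseCase("Risk flagging", impact=5, risk=2, weeks_to_value=8),
    UseCase("Intake and triage", impact=4, risk=2, weeks_to_value=6),
    UseCase("Customer credit scoring", impact=5, risk=5, weeks_to_value=30),
]
for use_case in shortlist(candidates):
    print(use_case.name)
```

The high-impact but high-risk candidate is filtered out first, so it never competes with the quick wins. It can return to the list once governance and data work reduce its risk score.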

Step 3: Build your data foundation

AI only works as well as the data it can access. This step is about building the foundation: closing the gap between where your data is now and where it needs to be.

Key actions include:

- Consolidate data sources: Into a shared, structured system

- Standardize formats: With consistent naming conventions

- Connect platforms: Through integrations and APIs

- Establish ownership: Assign specific roles responsible for data quality
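Standardizing formats often starts with something as simple as agreeing on field names. The sketch below shows one possible approach, assuming records arrive as dictionaries with department-specific column names. The alias table and the field names in it are hypothetical.

```python
import re

# Hypothetical mapping from department-specific names to the shared convention.
FIELD_ALIASES = {
    "proj_name": "project_name",
    "project": "project_name",
    "due": "due_date",
    "deadline": "due_date",
    "owner_email": "owner",
}


def to_snake_case(name: str) -> str:
    """Normalize 'Due Date' or 'dueDate' style names to 'due_date'."""
    name = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", name.strip())
    name = re.sub(r"[\s\-]+", "_", name)
    return name.lower()


def standardize(record: dict) -> dict:
    """Rename keys to the shared convention; values are left untouched."""
    clean = {}
    for key, value in record.items():
        snake = to_snake_case(key)
        clean[FIELD_ALIASES.get(snake, snake)] = value
    return clean


marketing_row = {"Proj Name": "Spring launch", "Deadline": "2025-03-01"}
ops_row = {"project": "Warehouse move", "dueDate": "2025-04-15"}
print(standardize(marketing_row))
print(standardize(ops_row))
```

Both rows end up with the same `project_name` and `due_date` keys, which is the precondition for any AI system to read across the two departments.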

Step 4: Choose the right AI technology approach

Your AI options range from building everything in-house to using AI that’s already embedded in your existing platforms. For most organizations, the key decision isn’t “which AI model is best” but “how do we get AI into the hands of every team without adding another disconnected system?”

Most companies do best with a hybrid approach, but prioritize embedded AI if you want the widest adoption. When AI lives inside the platform where teams already manage their work, the “separate system” barrier disappears.

Step 5: Design responsible AI governance from the start

Governance isn’t just a compliance box to check. It’s what lets you move fast. Companies that build governance in from day one scale faster. They skip the delays from security reviews and legal pushback later. Yet according to Deloitte, just 21% of companies report a mature model for governing autonomous AI agents, with data privacy and security cited as the most concerning AI risk by 73% of respondents.

Governance design principles include:

- Transparency: Every AI action is visible and explainable

- Granular permissions: Defining exactly which data AI can access

- Human oversight: Checkpoints where people validate AI outputs

- Complete audit trails: For accountability and compliance

- Data ownership: Ensuring the organization retains control of all content
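To show how permissions, human oversight, and audit trails fit together, here is a minimal sketch of a policy check. It is not tied to any vendor’s API: `AgentPolicy`, `request_action`, and the dataset and action names are all hypothetical, and a real audit log would live in durable, append-only storage rather than an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentPolicy:
    """Hypothetical policy object describing what one agent may do."""
    agent: str
    allowed_datasets: set
    needs_human_approval: set = field(default_factory=set)


audit_log = []  # stand-in for durable, append-only storage


def request_action(policy: AgentPolicy, action: str, dataset: str) -> str:
    """Decide whether an agent action is denied, queued for review, or allowed."""
    if dataset not in policy.allowed_datasets:
        decision = "denied"
    elif action in policy.needs_human_approval:
        decision = "pending_review"
    else:
        decision = "allowed"
    # Every request is recorded, including denied ones.
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": policy.agent,
        "action": action,
        "dataset": dataset,
        "decision": decision,
    })
    return decision


policy = AgentPolicy(
    agent="status-reporter",
    allowed_datasets={"projects"},
    needs_human_approval={"send_external_email"},
)
print(request_action(policy, "summarize", "projects"))
print(request_action(policy, "summarize", "payroll"))
print(request_action(policy, "send_external_email", "projects"))
```

The three calls return `allowed`, `denied`, and `pending_review` in that order, and each one leaves an entry in `audit_log`. Checking data access before anything else means an agent can never reach the human-review queue for data it should not see.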

Step 6: Redesign workflows around AI

The biggest mistake? Layering AI onto existing workflows without rethinking how work should actually flow. AI adoption is your chance to redesign workflows, not just add tech on top.

Workflow redesign principles include:

- Identify handoff points: Where work moves between people or systems

- Automate repetitive sequences: So people focus on judgment-intensive work

- Redesign collaboration: Around human-AI partnerships with defined roles

- Standardize before automating: Since AI amplifies whatever process it’s applied to

Step 7: Invest in people alongside technology

Invest in technology without investing in people, and you’ll end up with expensive shelfware. This step determines whether AI keeps getting more valuable or stalls after launch.

People-investment priorities include:

- Role-specific training: For executives, managers, and team members

- Change champions: Who can demonstrate value and coach peers

- Psychological safety: For experimentation and learning

- Continuous learning: Built into organizational rhythm

- Career path evolution: Showing how AI augments roles rather than replacing them

How to measure AI adoption ROI across teams

Measuring ROI is what separates strategic AI adoption from expensive experiments. Without metrics, you can’t prove value to leadership, justify more investment, or figure out which AI projects to scale.

Operational metrics that prove AI value

Operational metrics are the easiest to measure, which makes them the foundation of your AI ROI case. Measure these before you deploy AI so you have a baseline.

| Metric category | What to measure | How AI impacts it |
|---|---|---|
| Time savings | Hours saved per week on automated workflows | AI handles status reporting, data entry, triage, and routine communications |
| Project delivery | On-time completion rates before and after AI adoption | AI flags risks earlier, enabling proactive intervention |
| Process throughput | Volume of requests or projects processed per period | AI agents handle intake, routing, and initial responses at scale |
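A baseline comparison for these three metric categories can be as simple as a percent-change calculation. The figures below are made up for illustration, and the metric names are hypothetical.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from the pre-AI baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


# Made-up numbers for illustration only.
baseline = {
    "hours_on_reporting_per_week": 40,
    "on_time_rate": 0.72,
    "requests_per_week": 150,
}
current = {
    "hours_on_reporting_per_week": 25,
    "on_time_rate": 0.81,
    "requests_per_week": 210,
}

for metric in baseline:
    change = percent_change(baseline[metric], current[metric])
    print(f"{metric}: {change:+.1f}%")
```

Without the `baseline` values captured before deployment, none of these changes can be computed, which is why the measurement has to start before the rollout.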

How to report AI results to leadership

This is where many AI projects lose steam: translating metrics into stories executives care about. Executives care about business results, not technical metrics or how many people clicked a button.

Connect metrics to business objectives by:

- Framing business impact: Report “AI-driven automation freed the equivalent of 5 full-time team members to focus on revenue-generating client work” rather than “AI saved 200 hours”

- Using live dashboards: Give leaders real-time visibility into performance. monday agents can automatically generate status reports and executive summaries that surface key metrics across departments

- Reporting by department: Show impact across different teams and use cases

- Including adoption rates: Alongside performance metrics

- Highlighting compounding returns: Show how value increases over time
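The reframing from “200 hours” to “5 full-time team members” is a one-line conversion. The sketch below assumes the 200 hours are saved per week and that a full-time week is 40 hours. The text states neither, so adjust both to your own definitions.

```python
def fte_equivalent(hours_saved_per_week: float, hours_per_fte_week: float = 40.0) -> float:
    """Convert weekly hours saved into full-time-equivalent headcount."""
    return hours_saved_per_week / hours_per_fte_week


print(fte_equivalent(200))  # 5.0 under the 40-hour assumption
```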

How agentic AI changes the adoption playbook

Agentic AI can plan, execute, and iterate on multi-step workflows on its own, not just respond to prompts. This represents a fundamental shift from “AI that assists” to “AI that acts.”

What agentic AI means for enterprise teams

Most companies have only used chatbots, copilots, and single-task automation so far. Agentic AI works differently. Give it a goal, and it breaks it into steps, pulls from multiple data sources, and delivers results.

What this means practically:

- Shifting from prompting to delegating: Teams assign goals rather than manage individual tasks

- Cross-functional execution: Agents pull data from multiple departments automatically

- 24/7 autonomous operation: Work continues without human intervention

- Scalable workforce: Multiple specialized agents operating in parallel

How people and AI agents work together

Human-AI collaboration isn’t about replacing people. It’s about people and agents working as one team, each doing what they’re best at. People bring judgment, creativity, relationships, and strategy. Agents bring speed, consistency, and the ability to process data without getting tired.

Collaboration patterns include:

- Direction and execution: People set direction while agents execute

- Insights and decisions: Agents surface insights while people make decisions

- Volume and nuance: Agents handle volume while people handle nuance

- Refinement and learning: People refine while agents learn from feedback

How to build an AI adoption plan with monday agents

So where does all this coordination actually happen? You need AI that works where your teams already are, with capabilities that deploy directly into existing workflows and governance built in from day one.

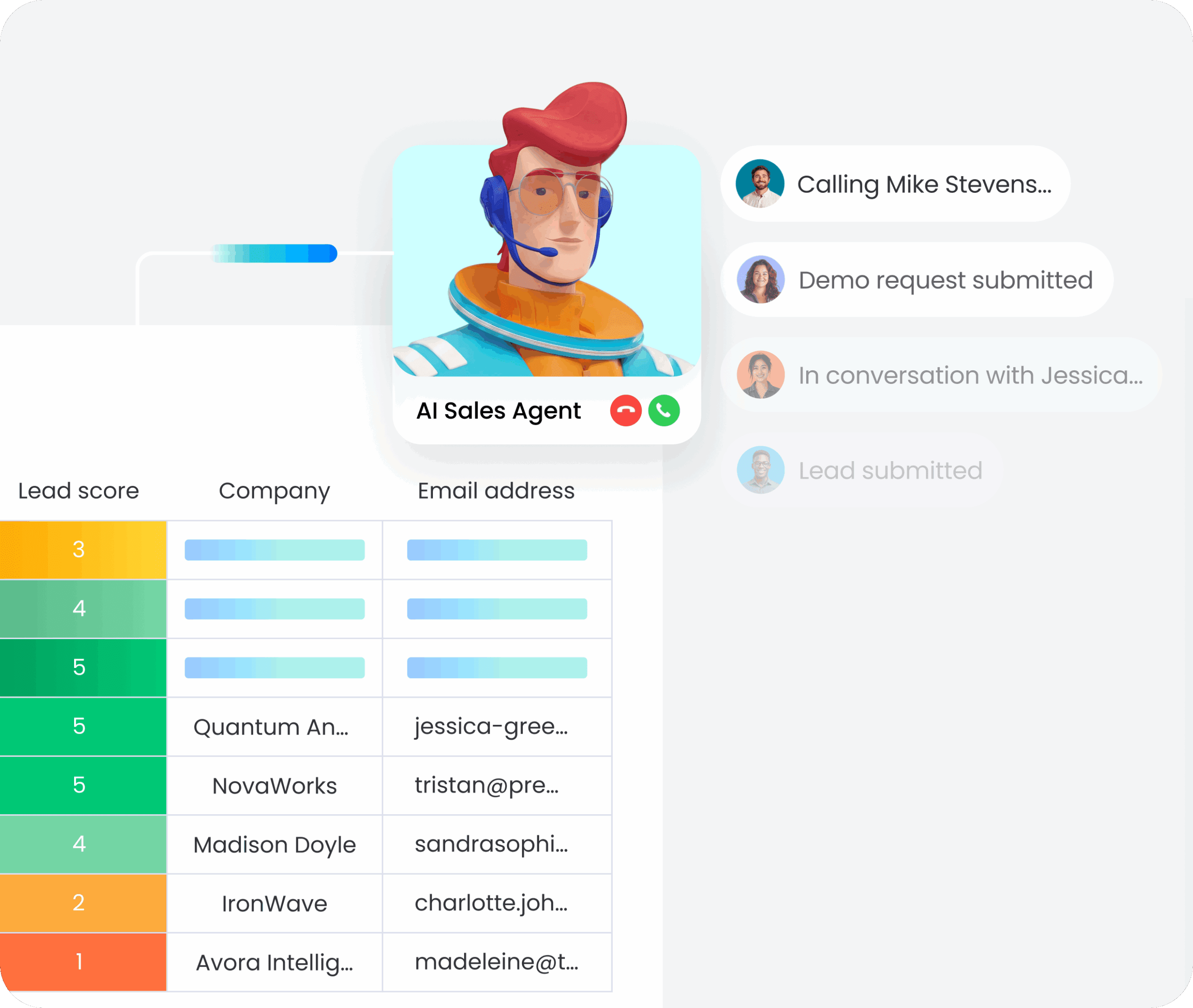

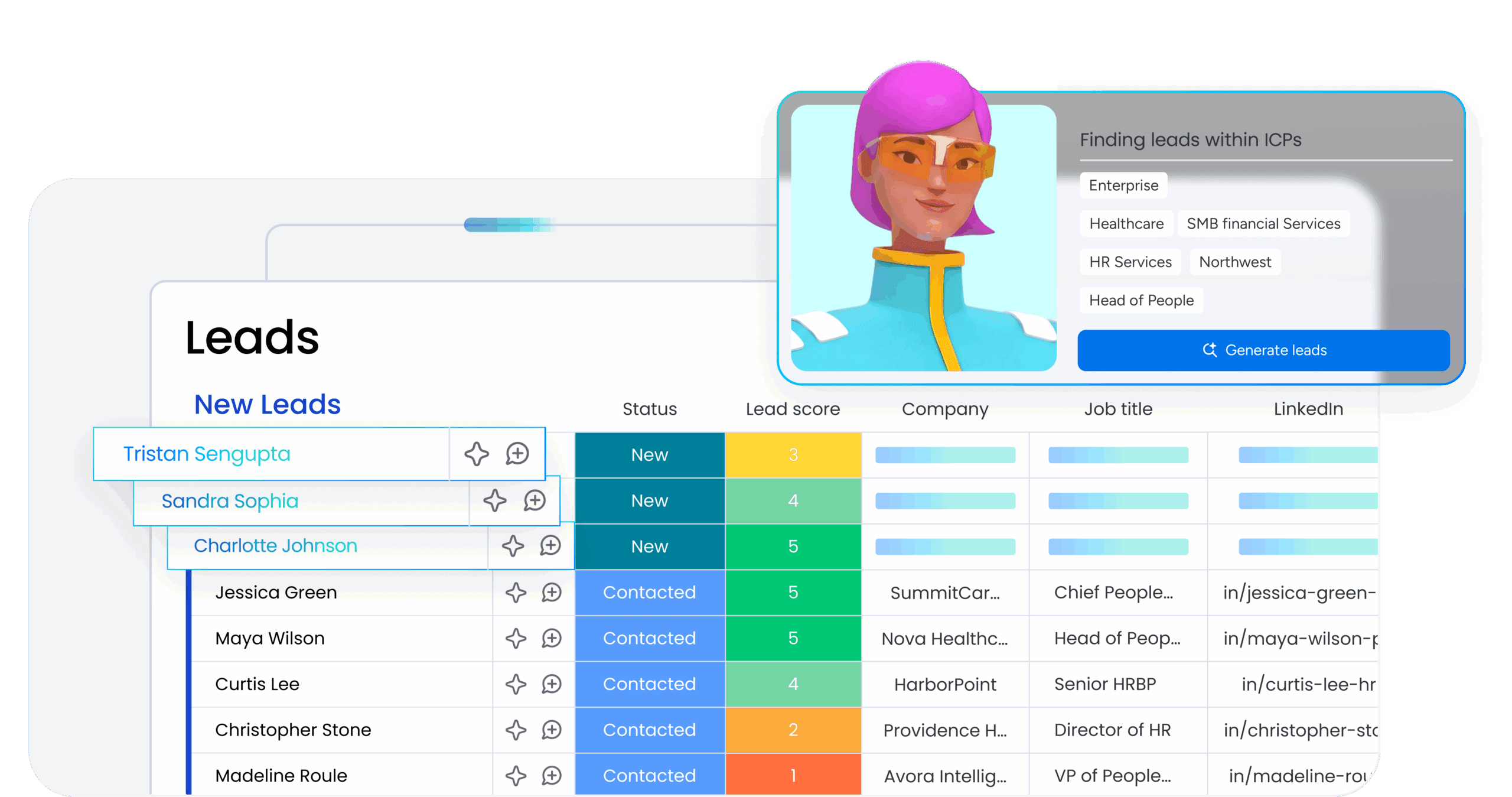

monday agents integrate into your existing workspaces, giving you AI capabilities that connect across departments without forcing teams to learn separate systems. Because agents work within the platform where your teams already manage projects, campaigns, tickets, and processes, adoption happens naturally rather than requiring wholesale workflow changes.

AI capabilities mapped to adoption strategy needs

monday agents deploy directly into your existing workspaces with ready-made capabilities for common workflows and a custom builder for specialized needs.

monday agents include:

- Ready-made AI agents: For common functions like risk analysis, status reporting, meeting summarization, and ticket triage that deploy into existing workspaces without custom development

- Custom AI agent builder: Lets organizations build tailored agents in three steps: describe the role and triggers, connect relevant knowledge and integrations, then test and refine

Governance capabilities for enterprise-scale AI adoption

monday agents include enterprise-grade governance controls that give legal, security, and leadership teams confidence to scale AI across departments.

monday agents provide:

- Granular access controls: Defining who can use AI capabilities and which data agents can access

- Simulation mode: For testing agent behavior before production activation

- Complete audit trails: Supporting accountability and compliance reporting

- Enterprise certifications: Including SOC 2 Type II, ISO/IEC 27001, and HIPAA support

Turning AI strategy into sustainable competitive advantage

An AI adoption strategy is really an organizational transformation strategy. Technology matters, but the companies pulling ahead aren’t the ones with the fanciest AI models. They’re the ones redesigning how work gets done, investing in people alongside tech, and building governance that speeds things up instead of slowing them down. As AI shifts from helping to doing, companies with connected data, solid governance, and AI-fluent teams will be best positioned to scale its impact.

Platforms like monday Work Management let you coordinate this transformation from strategy to execution, connecting people and AI agents with shared context and enterprise-grade security. With monday agents, you get AI capabilities that deploy directly into existing workspaces, making enterprise-scale adoption possible without forcing teams to learn separate systems.

FAQs

How long does it take to implement an AI adoption strategy?

Most companies take 12–18 months to go from strategy to full-scale deployment, though you can see early wins in 2–3 months.

What budget should organizations allocate for AI adoption?

Budget for platforms, training, change management, and governance—not just software licenses. Companies that spend too much on tech and too little on people see lower adoption rates.

What governance controls are needed before scaling AI across departments?

You need granular permissions, audit trails for every AI action, human validation checkpoints, and compliance with standards like SOC 2 Type II and ISO/IEC 27001.

How do you handle employee resistance to AI adoption?

Address resistance with honest communication about how AI helps rather than replaces people. Add role-specific training, involve teams early in redesigning workflows, and show real examples of AI making work better.